Introduction

This is the first post on ninokroesen.com and ninokroesen.nl, so it is the one place on the site where I explain the thing rather than doing the thing. Future posts will go straight into the problem at hand — a production system misbehaving, a research run that came back with something interesting, or an independent project that needs an honest write-up. This post is the index: who is writing, what I am actually working on, and what the blog is going to cover once topical posts start landing.

The angle is operator-developer. I build software, I keep the infrastructure around it running, I run longer-term research on the side, and I ship small independent experiments of my own. That hybrid seat is the reason the posts here will read differently from pure engineering blogs or pure founder content. The tradeoffs in each post come from having to do more than one of those things at the same time, not from focusing on only one of them.

Honestly, the other reason this site exists is that writing posts like this one is the most useful reflective tool I have found for my own work. Sitting down to write this introduction has already forced me to dig back into what has actually worked on each of these projects, what has not, and what I should focus on next — the kind of honest evaluation that is easy to skip when you are heads-down on the next thing in the queue. That is part of what this blog is for. Plenty of the posts going forward are going to read more like working-out-loud than like a finished opinion, and that is by design rather than by accident.

Who’s writing this

I am Nino Kroesen. I am based in the Achterhoek region of the Netherlands. Dutch is my native language, and I write in both Dutch and English. Posts on this site are drafted in English first and then translated into Dutch, so the .com and .nl versions of the site stay in sync rather than running separate editorial streams.

My day-job role is Lead Developer at WorkWear4All B.V., a national Dutch workwear operation running a multi-store Magento 2 stack across several European markets. The systematic trading work on this blog is separate from the day-job — personal research I do in my own time, outside of WorkWear4All, and the posts about it will be written from that angle.

I work primarily on a MacBook Air, and I lean heavily on agentic coding tools for day-to-day development across every project listed below. I dabble across whatever is available at the moment rather than committing to a single one, and I am actively on the lookout for new ones that genuinely improve the workflow rather than getting in the way of it. For anything compute-heavy — and a fair bit of the AI research plus a decent chunk of the trading work falls into that bucket — the laptop is the wrong tool, so I rent compute in the cloud rather than trying to run the heavy runs locally. My suppliers at the moment are Netcup for general VPS and server work, Runpod for on-demand GPU rental when an AI experiment needs a card bigger than anything inside my own machine, and Google Colab for Jupyter notebooks that need a heavy-compute kernel without setting up a whole environment around them. That split — local for development, cloud for the compute-heavy runs — is the shape of the setup, and it will colour the posts where compute itself is what is holding something up.

What I’m working on right now

A few things in parallel. I am listing them here so that later posts can refer back to “the trading research stack” or “the WorkWear4All Magento cluster” without re-introducing each one every time.

WorkWear4All

WorkWear4All is a multi-store Magento 2 e-commerce operation — multiple storefronts (workwear4all.nl, workwear4all.be, workwear4all.de, workwear4all.com, workwear4all.pl and several more specialised sub-stores) sharing one backend, running on Apache + Varnish + PHP-FPM + Redis. The Apache choice is an early one that was made by an external developer before I came into the picture, and with hindsight it is one I regret — we are hitting enough performance friction that a migration to Nginx is already on the roadmap. I am lead developer on the platform, and I also coach the juniors and interns on the engineering side.

The interesting parts, from a writing perspective, are the operational ones. The _cl changelog tables that quietly balloon in the background until something in the indexing pipeline breaks. The indexer cron groups whose documented behaviour and real behaviour are subtly different. The multi-country MAGE_RUN_CODE plus locale plus Varnish VCL coordination that has to stay correct across all of these storefronts without anybody consciously thinking about it on any given day. More broadly, the Magento ecosystem is not a great fit for modern web development, and that colours a lot of the operational content on this blog. The issues we hit are frequently the kind that are hard to diagnose without reaching into adjacent components of the stack to rule them out, and fixing one thing often means understanding the three other things that all thought they were responsible for the same behaviour. The posts that come out of this part of the work come from this seat — not from reading the documentation, but from running into the edges of it in production. If a module is worth installing, I will say so and why; if it isn’t, I will say that too.

Hourly

Hourly is a free menu-bar macOS time tracker I am building in SwiftUI. Its project page lives at /hourly, with support at /hourly/support. The deliberate position is: no paywall, no in-app purchases, no subscription, no account, no cloud — storage is local to your Mac and stays there. The market reference points are WorkingHours, Toggl, and Timery, and every one of them sits behind some form of paywall on the Mac App Store. The point of difference for Hourly is that it does not have a business model that eventually asks you for your credit card.

The less obvious reason Hourly exists is that I am using it as an experiment. Generative AI has made it genuinely trivial to ship the kind of feature set that a small SaaS used to charge a monthly subscription for, and I want to find out — empirically — how much of a threat that actually is to the SaaS providers currently holding a category like this one. So I am launching Hourly as lean as I possibly can: no marketing spend, no growth team, no retention plumbing, just a free app that does the job well and a clean project page on this site. What I will actually be measuring is whether a decent free tool, built in a fraction of the time it would have taken two years ago, can move the needle on installs and attention in a category where every incumbent charges. What comes out of Hourly in writing is about the experiment itself — the product decisions behind staying free and local, what I chose not to build and why, and the adoption numbers once those exist and are worth talking about.

Systematic trading research

Outside of the day-job I run systematic trading research as a personal project in my own time. The current iteration is a systematic forex strategy talking to MetaTrader 5 through a Node.js broker bridge. It is in paper-trading validation and does not trade live capital. There are sensible risk controls in place even at this stage: a circuit breaker calibrated against the backtested drawdown, so that drawdown under live market conditions hits a hard ceiling and the strategy pauses itself for review. Beyond that, I am keeping the content on this system heavily redacted: no published performance numbers, no parameter values, no specific instrument lists, nothing that would let a reader reverse-engineer the strategy from what I write on this blog. That is a deliberate editorial rule, not modesty.
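To make the circuit-breaker idea concrete without giving anything away, here is a minimal sketch of one way such a breaker could work. It is not the actual implementation: the class name, the `tolerance` multiplier, and every number in it are made-up placeholders. It tracks the equity peak and pauses once the drawdown from that peak exceeds a limit derived from the backtested maximum drawdown.

```python
from dataclasses import dataclass


@dataclass
class DrawdownBreaker:
    """Pauses a strategy once drawdown exceeds a limit derived from the backtest."""

    backtest_max_drawdown: float  # fraction of equity, e.g. 0.10 = 10% (placeholder)
    tolerance: float = 1.0        # hypothetical multiplier on the backtested figure
    peak_equity: float = 0.0
    paused: bool = False

    @property
    def limit(self) -> float:
        # Largest drawdown (as a fraction of peak equity) tolerated before pausing.
        return self.backtest_max_drawdown * self.tolerance

    def update(self, equity: float) -> bool:
        """Record the latest equity; return True if trading may continue."""
        if equity > self.peak_equity:
            self.peak_equity = equity
        if self.peak_equity > 0:
            drawdown = (self.peak_equity - equity) / self.peak_equity
            if drawdown > self.limit:
                self.paused = True  # stays paused until someone calls reset()
        return not self.paused

    def reset(self) -> None:
        """Manual reset after review; peak tracking starts over."""
        self.peak_equity = 0.0
        self.paused = False
```

The one design choice worth noting in the sketch is that the breaker latches: once tripped, a recovery in equity does not silently resume trading, because the point of the pause is a human review.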

The main challenge on this project is not the trading part — it is the research part. The pipeline is computationally heavy, and generating a meaningful data set on a single strategy variant takes roughly a week to come back. That is a long feedback loop by the standards of any other software work I do, where I am used to changing something and seeing the effect in seconds or at most minutes. Sitting a week between hypothesis and result has been the hardest adjustment of the whole project, and it shapes the kind of experiments I am willing to run in the first place — expensive ones get fewer shots, and there is a real discipline required to not keep nudging things mid-run while the data is still coming in. What comes out of this part of the work in writing is engineering-first: the design of the research harness, the broker integration, and the statistical thinking that does the actual work of telling a real edge apart from a lucky backtest. I will not write “I made $X last month” posts, I will not publish live P&L, I will not sell signals, and I will not offer financial advice.

AI agent experiments

I also spend time on routed-model agentic systems, with mixed feelings about the results so far. The architecture I have been iterating on uses a small reasoning model (Nanbeige4-3B) as an orchestrator that hands off to larger models depending on task complexity — DeepSeek V3.2 for harder reasoning, a local R1-32B for workloads I want to keep private, and Claude Sonnet for Dutch-language customer copy.
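As a rough sketch of the routing described above, the hand-off can be reduced to a plain function. The model names come from the paragraph; everything else is an assumption for illustration: the `Task` fields, the `complexity` score (which in a routed stack would be produced by the orchestrator model, stubbed here as a number), the threshold, and the priority order between the rules.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    ORCHESTRATOR = "Nanbeige4-3B"
    HARD_REASONING = "DeepSeek V3.2"
    PRIVATE_LOCAL = "R1-32B (local)"
    DUTCH_COPY = "Claude Sonnet"


@dataclass(frozen=True)
class Task:
    prompt: str
    complexity: float = 0.0            # 0..1, hypothetical orchestrator score
    private: bool = False              # must stay on local hardware
    dutch_customer_copy: bool = False  # Dutch-language customer-facing text


def route(task: Task, hard_threshold: float = 0.6) -> Target:
    """Pick a model for a task; rule order is an assumption of this sketch."""
    # Privacy is checked first so private work never reaches a hosted model.
    if task.private:
        return Target.PRIVATE_LOCAL
    if task.dutch_customer_copy:
        return Target.DUTCH_COPY
    if task.complexity >= hard_threshold:
        return Target.HARD_REASONING
    # Simple tasks stay on the small orchestrator itself.
    return Target.ORCHESTRATOR
```

For example, `route(Task("summarise this ticket", complexity=0.2))` stays on the orchestrator, while the same task with `private=True` goes to the local model regardless of its score.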

The honest take is that while building the framework I kept catching myself curve-fitting the scaffolding to produce a specific response rather than doing the harder work of making the system actually reason through the task. That is not a satisfying place to land. On top of that, the operational friction was real — <think> block bloat eating context windows on long sessions, routing latency on the small orchestrator when it sat in the hot path, and the brittle context-engineering required to keep the stack coherent across a multi-step task. And the costs did not help either side of the setup. For sensitive workloads I fell back to commercial LLM APIs billed on a per-million-token basis and paid directly; for mid-sized self-hosted models I tried renting on Runpod and the bill racked up faster than the research output justified. Neither end of the spectrum was sustainable, and the project is on the backburner as a result.
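One common mitigation for the `<think>` bloat mentioned above is to strip reasoning blocks from earlier assistant turns before the history is sent back to the model. The sketch below shows that general technique and is not a description of the stack discussed here. It assumes the history is a list of `{"role", "content"}` dictionaries and that reasoning is wrapped in literal `<think>...</think>` tags.

```python
import re

# Non-greedy match so several separate blocks in one message are each removed.
THINK_BLOCK = re.compile(r"<think>.*?</think>\s*", re.DOTALL)


def strip_think_blocks(messages: list[dict[str, str]]) -> list[dict[str, str]]:
    """Return a copy of the history with <think> blocks removed from assistant turns."""
    cleaned = []
    for message in messages:
        content = message["content"]
        if message["role"] == "assistant":
            content = THINK_BLOCK.sub("", content).strip()
        # Build a new dict so the caller's history is left untouched.
        cleaned.append({**message, "content": content})
    return cleaned
```

Only assistant turns are rewritten, so a user message that happens to contain the tag text passes through unchanged.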

I still think small models are where this ecosystem eventually has to go. The compute cost of the current frontier LLMs is not a sustainable base to build software on top of, and the recent improvements in small-model capability have been enough that I am planning to reboot the project. When I do, the focus is going to shift: less “a model that reasons on its own,” more “building good tools for an LLM to use.” That is a different problem from orchestration — it sits closer to traditional software engineering with an LLM as one of the callers — and from what I have seen so far it is a much more productive frame than trying to get a routed stack to carry a long task by itself. What comes out of this project in writing is about exactly that shift — what I tried, what did not work, and what the second attempt is built around.

What I’ll write about here

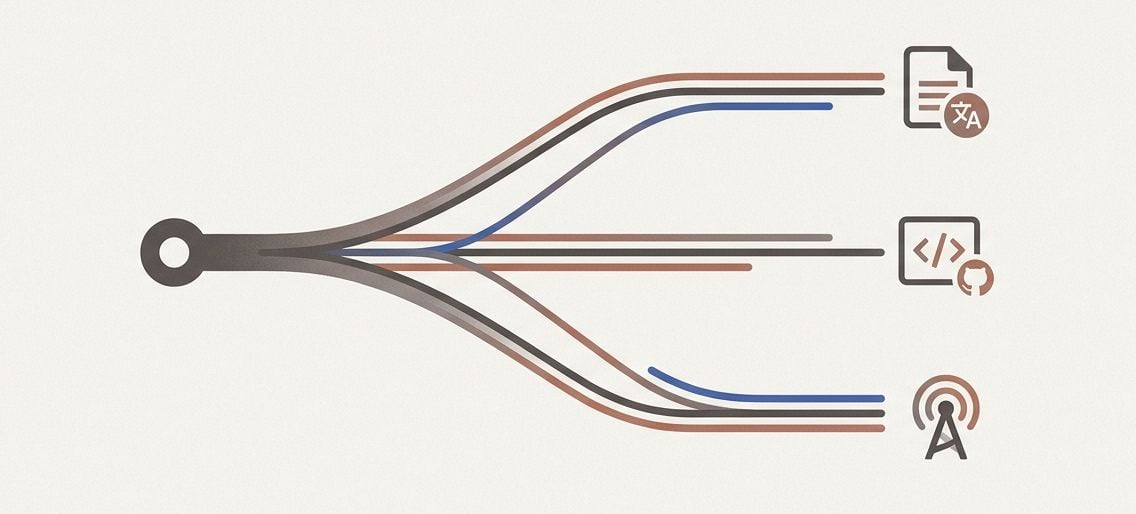

Three content pillars. Everything on this site is drafted in English first and then translated into Dutch, so every post that lands on .com gets a paired Dutch version on .nl. There is no separate Dutch-only editorial stream and no topic that lives on one domain and not the other.

The pillars are not about specific projects, because projects come and go on a site like this one. They describe the three modes of work that actually produce content worth writing down.

The first pillar is maintaining and building out production systems. The day-job shape of the work: a running platform with real users, real data, and a long list of operational edges that only show up once the system has been in use for a while. Posts in this pillar cover the infrastructure-level reality of keeping something like this alive and growing — background migrations, index and cache gotchas, performance debt you have inherited from earlier decisions, extension or dependency evaluations with honest tradeoffs, and the architectural choices you would make differently if you were starting from scratch today. Right now this pillar is fed mostly by the Magento stack at WorkWear4All, but the framing is general; anything that is a running system with real users is fair game.

The second pillar is researching ideas with data-science methods. Slower work, longer feedback loops, more patience. The trading research is the current example, but the pillar covers any project where the loop is: form a hypothesis, build a harness to test it, run the experiment, and interpret the output honestly. Posts here will be about the engineering of research — how to build a harness that does not quietly lie to you, how to think about sample sizes and statistical significance, how to design experiments when a single run takes days to come back, and how to separate a signal from a fit. No get-rich narratives. No signal-selling. Where the underlying work is commercially sensitive, the posts will be heavily redacted on anything that would let a reader reverse-engineer a specific strategy.

The third pillar is shipping and evaluating independent software experiments. Small products launched on deliberate hypotheses, with honest writeups of what happened — including the ones that did not pan out. Right now this pillar is fed by Hourly (which is itself set up as an experiment on what generative AI does to a paywalled SaaS category), a planned post-mortem on the webshop I launched and shut down, and whatever the AI-agent reboot turns into. The through-line is: ship the thing, measure the result, write it up without varnish, and move on. No launch-day hype on anything that has not actually been used yet.

Out of scope for this site, deliberately: general AI and LLM opinion pieces, personal-finance advice for non-entrepreneurs, consumer product reviews, political commentary, and meta-posts about blogging itself. That is a scope decision, so the site does not drift into whatever is trending. If a topic is here, it is because I am doing the thing, not because I have an opinion about the thing.

How to follow along

The site runs on two domains: ninokroesen.com for English, ninokroesen.nl for Dutch — same author, same backend, paired through hreflang. Posts are drafted in English and translated into Dutch, so the two domains stay in sync; whatever shows up on one has a counterpart on the other. My code lives at github.com/vultwo, which is where some of the tooling and libraries I reference in posts are published. RSS autodiscovery is wired into the layout, so any modern reader will pick up the feed without any extra work on your side. I may also add an opt-in email newsletter later for readers who prefer email over RSS; if I do, it will be low-frequency, and there will not be a modal popping up to ask for your address before you have even read anything.
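For readers curious what "paired through hreflang" means in practice, here is a minimal sketch of generating the alternate-link tags for one post. The domains are the real ones. The `https` scheme, the example paths, the function name, and the choice of English as `x-default` are assumptions for illustration, not a description of how the site's backend actually does it.

```python
from html import escape

DOMAINS = {"en": "https://ninokroesen.com", "nl": "https://ninokroesen.nl"}


def hreflang_links(paths: dict[str, str], default_lang: str = "en") -> list[str]:
    """Build <link rel="alternate"> tags for every language version of one post."""
    links = []
    for lang, path in paths.items():
        href = escape(DOMAINS[lang] + path, quote=True)
        links.append(f'<link rel="alternate" hreflang="{lang}" href="{href}">')
    # x-default names the fallback version for visitors matching no listed language.
    default_href = escape(DOMAINS[default_lang] + paths[default_lang], quote=True)
    links.append(f'<link rel="alternate" hreflang="x-default" href="{default_href}">')
    return links
```

Calling `hreflang_links({"en": "/introduction", "nl": "/introductie"})` (hypothetical slugs) yields three tags. Both language versions of the page should emit the same set, since hreflang annotations are expected to be reciprocal.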

If any of the work described above overlaps with something you are building, or there is an interesting project or question worth comparing notes on, my contact details are on the about page. I read what comes in.

Related reading

- A research pipeline for the internet-shaped part of the decision
  I built a 5-stage pipeline over 177K Dutch e-commerce domains to pick a niche. V1 picked one. The shop died in operations the pipeline couldn't see.

- An agent built around not calling the LLM
  A personal agent built so the default question every tick is whether the model needs to be called, and an architecture where the answer is usually no.

- A diamond painting shop the code could not save
  I built a custom Node webshop for personalized diamond paintings, sold one canvas, and shut it down inside a month. The supplier was the bottleneck.