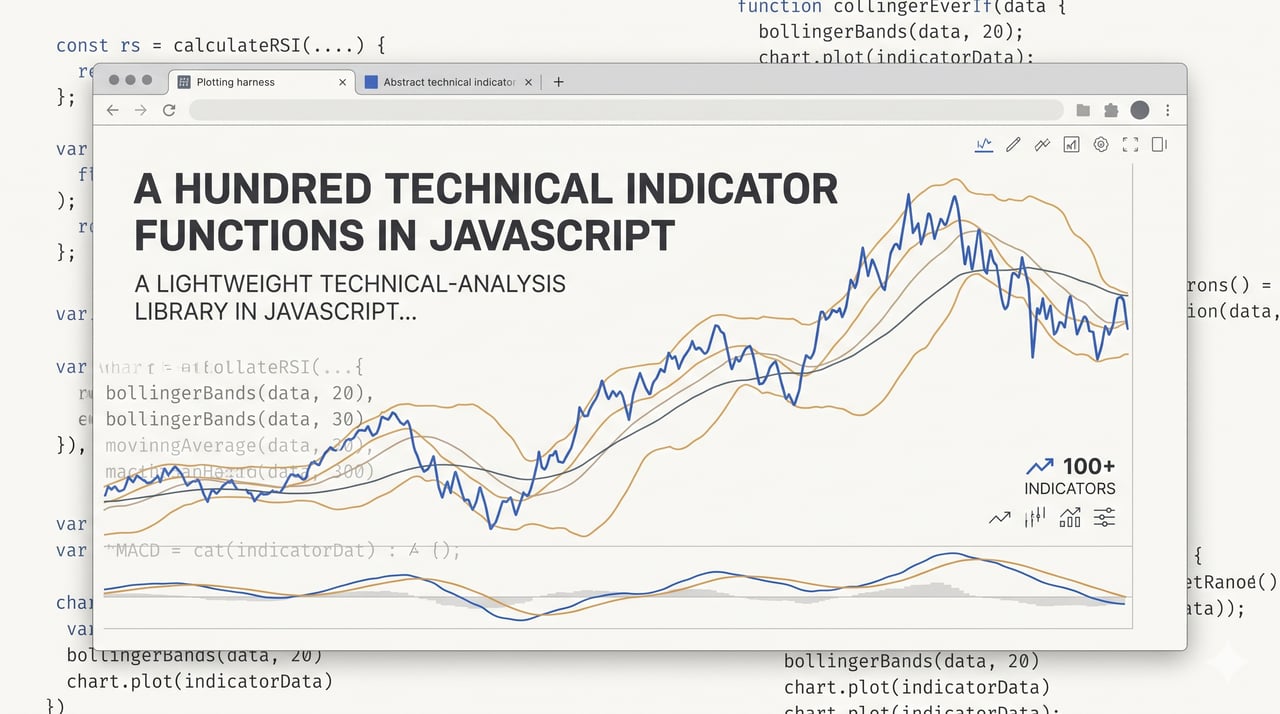

A hundred technical indicator functions in JavaScript

My first open-source project is a technical-analysis library called ta.js — around a hundred indicator functions in JavaScript, first commit on 2020-08-24, v1.17.0 shipped earlier this week. I started it because I needed the indicator functions for my own work anyway and writing each of them turned out to be the cleanest way to learn them. The first three days’ commits added Money Flow Index, Rate of Change, and On-Balance Volume; the RSI fix landed four days later; Stochastics two weeks in. The library was being built in parallel with me working through the textbook. I published it because if anyone actually used it, they might open issues on the ones I had gotten wrong, and that scrutiny loop was cheaper than anything I could have built for myself.

The honest read of the thing five and a half years later: I built my first open-source project in JavaScript because I didn’t yet know how much execution speed matters in quantitative systems — the language turned out to be wrong for the backtest and right for the half of the work where I was still figuring out what I was building. That tradeoff is not something I picked on purpose at the start. It is something I learned later, once I had tried to run backtests at non-trivial size and watched them take long enough that the only honest response was to move the production-shaped parts of the work to a different stack. The library stayed where it was still earning its place, which was everything around the backtest — loading data, slicing it, plotting an indicator against a window of OHLC in a browser tab, iterating on a formula until it matched what the textbook said it should.

The shape

A hundred-ish standalone pure functions — moving averages, oscillators, bands, volatility indicators, statistics, chart transforms, a PRNG — each in its own file, each short enough to read in one sitting. The smallest moving-average implementation is 9 lines of JavaScript. RSI is 12. The whole library has one devDependency (esbuild, for the bundles) and zero runtime dependencies.
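To give a sense of the scale described here, below is a dependency-free RSI written for this post. It is not ta.js's 12-line version. It spells out the seed average and Wilder smoothing with comments, so it runs longer. Returning 50 for a completely flat window is a convention chosen for this sketch, and implementations differ on that edge case.

```javascript
// Illustrative Wilder-smoothed RSI, written for this post; not ta.js's source.
function rsi(data, length = 14) {
  const out = [];
  let avgGain = 0;
  let avgLoss = 0;
  for (let i = 1; i < data.length; i++) {
    const change = data[i] - data[i - 1];
    const gain = Math.max(change, 0);
    const loss = Math.max(-change, 0);
    if (i <= length) {
      // Seed: simple average of the first `length` price changes.
      avgGain += gain / length;
      avgLoss += loss / length;
      if (i < length) continue;
    } else {
      // Wilder smoothing: keep (length - 1)/length of the previous average.
      avgGain = (avgGain * (length - 1) + gain) / length;
      avgLoss = (avgLoss * (length - 1) + loss) / length;
    }
    if (avgLoss === 0) out.push(avgGain === 0 ? 50 : 100); // flat window -> 50 (a convention)
    else out.push(100 - 100 / (1 + avgGain / avgLoss));
  }
  return out;
}
```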

It distributes three ways: the npm package, an IIFE bundle hosted at unpkg that you can drop into an HTML page with a <script src="https://unpkg.com/ta.js/ta.iife.min.js"> and call as ta.sma(...) against a browser global, and an ESM bundle from the same host for named imports. The IIFE path is the one I still find most useful. Any HTML file becomes a charting harness for an indicator idea without a build step, which is the mode I was actually in most of the time.

The one piece the library has that is not pure JavaScript is a Monte Carlo sim written on top of Node’s worker_threads. It is loaded at runtime only if Node ≥ 12 is detected; browsers get a stub for it instead. The gate is a single if at the bottom of the entry module, which reads the Node version off process.versions.node and either mounts the worker or does not. That conditional is the only environment-check the library has.
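Here is a minimal sketch of that kind of gate. It assumes the check parses the major version out of process.versions.node. The names supportsWorkerSim and simStub, and the sim signature, are hypothetical, because the post does not show the real entry module. Worker and its workerData option are the standard worker_threads API.

```javascript
// Hypothetical sketch of a Node-version gate; ta.js's own check may differ.
function supportsWorkerSim(nodeVersion) {
  // process.versions.node looks like "20.11.1" under Node and is absent in browsers.
  if (typeof nodeVersion !== 'string') return false;
  const major = parseInt(nodeVersion.split('.')[0], 10);
  return Number.isInteger(major) && major >= 12;
}

function simStub() {
  throw new Error('sim needs Node >= 12 (worker_threads); it is not available here');
}

const nodeVersion =
  typeof process !== 'undefined' && process.versions ? process.versions.node : undefined;

const ta = {}; // stands in for the library's exported object

if (supportsWorkerSim(nodeVersion)) {
  // Only reached under Node, so `require` is safe here.
  const { Worker } = require('worker_threads');
  // Hypothetical signature: the real sim's arguments and worker script are not shown in the post.
  ta.sim = function sim(workerFile, workerData) {
    return new Worker(workerFile, { workerData });
  };
} else {
  ta.sim = simStub;
}
```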

Why JavaScript

JavaScript was the language I was fastest in at the time and it was the language that gave me the visualisation half of the workflow for free. An HTML page with a <canvas> and a <script src="..."> is a charting harness with no build step, no virtual environment, no toolchain. I could load a CSV in the browser, pass a slice of it to an indicator, and watch the output draw itself onto a canvas in the same tab I had the data open in. For the part of the work I was actually doing most days — “does this formula produce a line that looks like the textbook version” — that loop was fast enough that I never once thought about replacing it. The CDN distribution was wired up on 2020-09-14, three weeks into the project. Visualisation-from-day-one was the earliest architectural decision the library made. There is also a small React companion at github.com/Bitvested/ta.web that wraps the library for chart use in a React app; it is not the canonical way to use ta.js for visualisation — the IIFE drop-in is — but it exists if you are already in a React codebase and want the same indicator output piped into a charting component.
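The CSV-to-indicator loop fits in a few lines. columnFromCsv is a hypothetical helper that assumes a header row and plain comma-separated fields with no quoting. In a browser tab the text would come from something like a file input, and the slice would then go straight into an indicator such as ta.sma.

```javascript
// Hypothetical helper: pull one numeric column out of CSV text with a header row.
// Assumes plain comma-separated fields with no quoting or embedded commas.
function columnFromCsv(text, column = 'close') {
  const lines = text.trim().split(/\r?\n/);
  const header = lines[0].split(',').map((h) => h.trim().toLowerCase());
  const index = header.indexOf(column);
  if (index === -1) throw new Error('column not found: ' + column);
  return lines.slice(1).map((line) => Number(line.split(',')[index]));
}

// In a browser tab the text might come from a file input (await file.text());
// a string literal keeps the sketch runnable anywhere.
const csv =
  'date,open,high,low,close\n' +
  '2020-01-01,1,2,0.5,1.5\n' +
  '2020-01-02,1.5,2.5,1,2\n' +
  '2020-01-03,2,3,1.5,2.5';
const closes = columnFromCsv(csv); // [1.5, 2, 2.5]
const recent = closes.slice(-2);   // the slice an indicator would receive, e.g. ta.sma(recent, 2)
```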

The alternatives the library would be benchmarked against today are Python with NumPy or pandas, a C++ or Rust backtester, or a hosted product that runs the backtest for you. Any of those would have been a better choice for the production-shaped work. None of them would have given me the in-browser visualisation path without more plumbing than was worth it for a first open-source project, and that was the part of the work I was actually doing. The cost of picking JavaScript was that the backtest story was going to hit a ceiling I had not anticipated. I hit it, shrugged, and moved production-shaped work off the library. The library stayed where it was still earning its place.

Array in, array out

The API shape across all hundred-ish functions is the same: take an array, return an array. ta.sma(prices, 14) eats an array of numbers and returns an array of SMAs. Indicators that need OHLC take an array of two-element or four-element arrays. The whole contract is “pass me the slice of history you want, get back the result for that slice.”
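One consequence of this contract matters for plotting. With the sma loop shown later in this post, the output is length - 1 elements shorter than the input, and output[k] belongs to the window that ends at input index k + length - 1. The sketch below pads the result back onto the input's x-axis. alignToInput is a hypothetical helper, and the padding rule only holds for indicators that lose a fixed warm-up, as this one does.

```javascript
// The post's sma loop, with a local sum so the sketch runs without the registry.
function sma(data, length = 14) {
  const out = [];
  for (let i = length; i <= data.length; i++) {
    let total = 0;
    for (let j = i - length; j < i; j++) total += data[j];
    out.push(total / length);
  }
  return out;
}

// Hypothetical plotting helper: left-pad with nulls so each output value sits
// under the input bar its window ends on.
function alignToInput(output, inputLength) {
  return new Array(inputLength - output.length).fill(null).concat(output);
}
```

For example, sma([1, 2, 3, 4, 5], 3) returns [2, 3, 4], and alignToInput of that result with an input length of 5 returns [null, null, 2, 3, 4]. This is ready to overlay on the original five bars.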

The alternative I did not build was a streaming API — an indicator instance you push prices into one at a time and that emits the next value on each push, caching its internal state. That would have been the right design for live-tick consumption, where the expensive thing is the state keep-alive across ticks rather than the math itself. I skipped it because it did not match how I was actually using the library. I was loading a CSV, slicing it, handing the slice to an indicator, and plotting the result. A stateful API would have been extra surface for a use case I did not have.
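For contrast, here is a sketch of the streaming shape that was skipped. It uses SMA because its state is just a running sum. StreamingSma is hypothetical and not part of ta.js. Each push is O(1): the ring buffer overwrites the oldest price, and the running sum is adjusted by the difference.

```javascript
// Hypothetical streaming SMA; ta.js does not ship this.
class StreamingSma {
  constructor(length = 14) {
    this.length = length;
    this.buffer = new Array(length).fill(0); // ring buffer of the last `length` prices
    this.count = 0;                          // prices pushed so far
    this.sum = 0;                            // running sum of the buffered prices
  }

  // Push one price; returns the current SMA, or null until `length` prices have arrived.
  push(price) {
    const slot = this.count % this.length;
    this.sum += price - this.buffer[slot]; // add the newest price, drop the one it overwrites
    this.buffer[slot] = price;
    this.count++;
    return this.count >= this.length ? this.sum / this.length : null;
  }
}

const stream = new StreamingSma(3);
const live = [1, 2, 3, 4, 5].map((p) => stream.push(p)); // [null, null, 2, 3, 4]
```

Over a very long stream the running sum accumulates floating-point rounding error. Periodically recomputing the sum from the buffer is a common remedy.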

The cost of the array-in shape is that the library is structurally ill-suited to live ticks. Every call re-walks the window; there is no incremental computation. For anyone trying to use it in a live loop, everything about online updates and feed integration is left to the caller. That is the price of a research-shaped API for a research-shaped use case.
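In practice, "left to the caller" looks something like the adapter below. It keeps a bounded history and re-runs a batch indicator on every tick. makeTickAdapter is hypothetical. Each tick costs a full pass over the window, which is the re-walk described above. For recursive indicators such as EMA, maxHistory has to be larger than length, because their latest value depends on more than the last window.

```javascript
// Batch SMA in the post's array-in, array-out shape.
function sma(data, length = 14) {
  const out = [];
  for (let i = length; i <= data.length; i++) {
    let total = 0;
    for (let j = i - length; j < i; j++) total += data[j];
    out.push(total / length);
  }
  return out;
}

// Hypothetical adapter: bounded history, full batch recompute on every tick.
function makeTickAdapter(indicator, length, maxHistory = length) {
  const history = [];
  return function onTick(price) {
    history.push(price);
    if (history.length > maxHistory) history.shift(); // drop the oldest price
    if (history.length < length) return null;          // still warming up
    const out = indicator(history, length);            // re-walks the whole window
    return out[out.length - 1];                        // keep only the latest value
  };
}

const onTick = makeTickAdapter(sma, 3);
const values = [1, 2, 3, 4, 5].map((p) => onTick(p)); // [null, null, 2, 3, 4]
```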

Flat for five and a half years

For almost the entire lifetime of this project the source layout was one flat entry module with every indicator and every utility registered against a single object. That was a deliberate choice. Flat is trivially greppable. Flat is trivially overrideable — ta.ema = myEma and every other indicator that calls ta.ema internally picks up the override on its next call. Flat is trivially copy-pasteable into a <script> tag when you want to prototype something new without publishing it first. The flat layout matched the way the library was being used more than a folder structure would have.
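The following is a minimal illustration of why the override works. It assumes that dependents call through the shared object rather than holding a direct reference. The sketch overrides sum rather than ema because sum is what the sma in this post calls. The counting wrapper is a hypothetical consumer override.

```javascript
// Illustrative flat object: `sma` calls `ta.sum` through the object, so the
// lookup happens on every call instead of being captured once at definition.
const ta = {
  sum(data) {
    let total = 0;
    for (const x of data) total += x;
    return total;
  },
  sma(data, length = 14) {
    const out = [];
    for (let i = length; i <= data.length; i++) {
      out.push(ta.sum(data.slice(i - length, i)) / length);
    }
    return out;
  },
};

// A consumer override: wrap sum to count calls. sma picks it up with no other change.
let sumCalls = 0;
const originalSum = ta.sum;
ta.sum = (data) => {
  sumCalls++;
  return originalSum(data);
};

const result = ta.sma([1, 2, 3, 4], 2); // [1.5, 2.5, 3.5], and sumCalls is now 3
```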

That held up until this week. The last commit before this week's was on 2024-07-22, so the project had been dormant for roughly 22 months before the refactor landed. The refactor itself was a single two-hour push: the first PR split the flat module into per-function files under a per-category directory structure, and the second replaced the hand-rolled bundle with an esbuild pipeline. The new layout is legible in a way the flat one was not, but the override pattern was load-bearing enough that giving it up was not an option. The fix is a shared indirection: a four-line module that exports an empty object, which the entry module populates at load time and which every per-function file reads from when it needs to call another indicator. The function files look like this now:

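```javascript
// Shared registry object, populated by the entry module at load time.
const ta = require('../_registry.js');

function sma(data, length=14) {
  for(var i = length, sma = []; i <= data.length; i++) {
    // ta.sum is looked up on the registry at call time, so overrides apply.
    var avg = ta.sum(data.slice(i-length,i));
    sma.push(avg / length);
  }
  return sma;
}

module.exports = sma;
```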

The registry module is:

```javascript
module.exports = {};
```

Consumer overrides still propagate the same way they always did — the entry module populates the registry object at load time, so a monkey-patch like ta.sma = myOverride is read by every dependent indicator on its next call. The refactor would not have been worth shipping without that piece. v1.17.0 went out the same afternoon as both PRs, with no soak window between the refactor and the release. If something in the new layout is wrong in a way I have not yet seen, it is going to surface from the people using the package, not from me.
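Below is a single-file sketch of the registry indirection. It assumes the entry module exports the registry object itself. The post does not show the entry module, so that detail is an assumption. In the real multi-file layout, the sharing comes from Node's module cache: every require('../_registry.js') returns the same object. The last line shows the failure mode the indirection avoids. If the entry exported a copy, the copy would accept an override, but no dependent would ever see it.

```javascript
// Single-file simulation of the registry layout. In the real package each
// section is its own module; here they share one scope so the sketch runs.

// _registry.js: a shared object that starts empty.
const registry = {};

// sum.js
function sum(data) {
  let total = 0;
  for (const x of data) total += x;
  return total;
}

// sma.js: reads `sum` from the registry at call time, like the post's sma.
function sma(data, length = 14) {
  const out = [];
  for (let i = length; i <= data.length; i++) {
    out.push(registry.sum(data.slice(i - length, i)) / length);
  }
  return out;
}

// index.js: populate the registry once every function is defined.
Object.assign(registry, { sum, sma });

// Assumption: consumers receive the registry object itself.
const ta = registry;

// Counter-example: a copy would take overrides that no dependent ever reads.
const copy = { ...registry };
```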

Open source as a scrutiny loop

I did not publish this library because I thought the world needed another TA library. I published it because I wanted the functions I had written to be looked at by people who knew the math better than I did. If I was going to get the definition of MFI wrong or the standard formula for the stochastic K wrong, I would rather find out from an issue on GitHub than from a sim that silently diverged from the market six months later.

That loop actually closed. Issues have come in — not a flood, but enough to correct implementations I would not have corrected on my own, and enough to point me at things I did not know about when I wrote the first version. The open-source posture did what I wanted it to do: turned the library’s correctness story from “I believe these are right” into “other people have looked at these and told me when they are not.” The cost side of this is the one most open-source maintainers know about — PRs to triage, expectations from users I had not signed up for — but for a hundred-function indicator library that never tried to grow past its original scope, that cost has stayed manageable.

The tests are a regression guard, not a correctness story

The tests are a flat assert.deepEqual loop over a fixtures file. No framework, no assertion library, no setup. Twenty-three lines of test runner, one expected-output fixture per indicator, and a try/catch per fixture that logs to console.error on a mismatch and moves on. The key line looks like this:

```javascript
try {
  assert.deepEqual(ta[x].apply(null, parameters[x].in), parameters[x].out);
} catch(e) {
  console.error('Test failed @ ' + x + '\n');
}
```

What this checks is “the output of this indicator on this fixture has not changed since the last time I checked the fixture in.” What it does not check is “the output is correct.” The fixture was computed from the implementation at the time I wrote it. If the implementation was wrong then, the fixture is wrong now, and the test is asserting that the wrong output has stayed stably wrong. That is a real caveat. Adversarial test design would have caught some of the bugs that instead landed in my inbox as issues.

The second honest caveat about the test setup is that the runner exits 0 even when a fixture fails. Failures go to console.error and the process still returns success. CI cannot fail the build on a regression without grepping stderr for the failure banner, which I never wired up. That is a bug in the runner, not in the tests, but it is a bug I have not fixed because, for a library of this shape, it has not yet cost me anything I noticed.

The rest of the honest list

Things I know are imperfect and have not fixed, in the same register:

- There is a hand-maintained minified bundle that predates the esbuild pipeline and still ships with the package. It is a third source of truth alongside the two esbuild-generated bundles, with no automated regenerator, and it can drift silently from the source tree if I forget to regenerate it on a given release. This week's work modernised the other two bundles; this one is still on me.
- The README and the EXAMPLES file both carry the anchor list of every indicator in the library. Adding one indicator takes three coordinated edits: the indicator file, the README entry, and the EXAMPLES entry. That is enough friction to quietly discourage contributions from people who would otherwise have sent one.
- The Monte Carlo sim worker calls back into the main module by name rather than by reference (ta.sum, ta.sma, ta.std, ta.normsinv), which means renaming any of those functions silently breaks the simulation. There is no type contract to catch it. The tests do not exercise sim end-to-end either, so a broken call would surface only when a user ran a simulation and got a wrong answer.
- JavaScript itself is too slow for a production-grade backtest at any serious scale. I have said this up front already, but it belongs in the honest list too, because anyone using the library on a non-trivial window of history is going to run into it, and it is not something the library can fix without ceasing to be a JavaScript library.
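Short of a type contract, one cheap mitigation for the name-based calls is to check at startup that every name the worker needs still resolves to a function. That way a rename fails loudly instead of mid-simulation. The sketch below is hypothetical: the four names come from the list above, and everything else is illustrative and not part of ta.js.

```javascript
// Names the sim worker calls back into, taken from the list above.
const SIM_DEPENDENCIES = ['sum', 'sma', 'std', 'normsinv'];

// Returns the names that no longer resolve to a function on the library object.
function missingDependencies(ta, names = SIM_DEPENDENCIES) {
  return names.filter((name) => typeof ta[name] !== 'function');
}

// Hypothetical guard to run before spawning any workers.
function assertSimDependencies(ta) {
  const missing = missingDependencies(ta);
  if (missing.length > 0) {
    throw new Error('sim: missing dependencies: ' + missing.join(', '));
  }
}
```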

I have not been in a rush to clean any of these up. This week's release modernised the distribution pipeline because that is the part of the project the people still using it actually touch. The rough edges above are ones I have lived with since 2020, and they have not yet cost me an issue I could not absorb.

Where it sits now

I use this library less than I did years ago. That is worth saying up front, because it shapes the rest of the answer. The production-shaped indicator work lives on a different stack now. ta.js is still the instrument I reach for when I want to prototype a formula in a browser tab or hand somebody a <script src="..."> and show them what an indicator actually is. That is a narrower role than the one it started in, but it is still a real role, and the people who are still using it are using it for something close to the same shape of work.

This week's release was not about reopening the project for active feature development. It was about bringing the internals up to date for the people who are still using it: a cleaner source layout, a modern bundle pipeline, and an override mechanism that survives the refactor. I have agentic coding tools helping me proofread and tighten the pieces I could not have tightened on my own in a reasonable two hours. What I have not committed to is a list of new functions to write. I am not sure yet whether there are many left that are worth adding to a library at this scope.

If a new round of function work does open up on it, the thing I most want to preserve is the original posture: small, copy-pastable indicator functions, one per file, short enough to read in one sitting. When I wrote the first version in 2020 I could not find a library that fit the use case I had — most of what was available felt bloated, with more surface than function — and the minimalist shape of what I ended up publishing was a direct reaction to that. Whatever comes next still has to pass the “can I drop one of these into a <script> tag and have it make sense on its own” test. If it does not, I would rather not add it.

Related reading

- A diamond painting shop the code could not save: I built a custom Node webshop for personalized diamond paintings, sold one canvas, and shut it down inside a month. The supplier was the bottleneck.
- An agent built around not calling the LLM: a personal agent built so the default question every tick is whether the model needs to be called, and an architecture where the answer is usually no.
- A research pipeline for the internet-shaped part of the decision: I built a 5-stage pipeline over 177K Dutch e-commerce domains to pick a niche. V1 picked one. The shop died in operations the pipeline couldn't see.