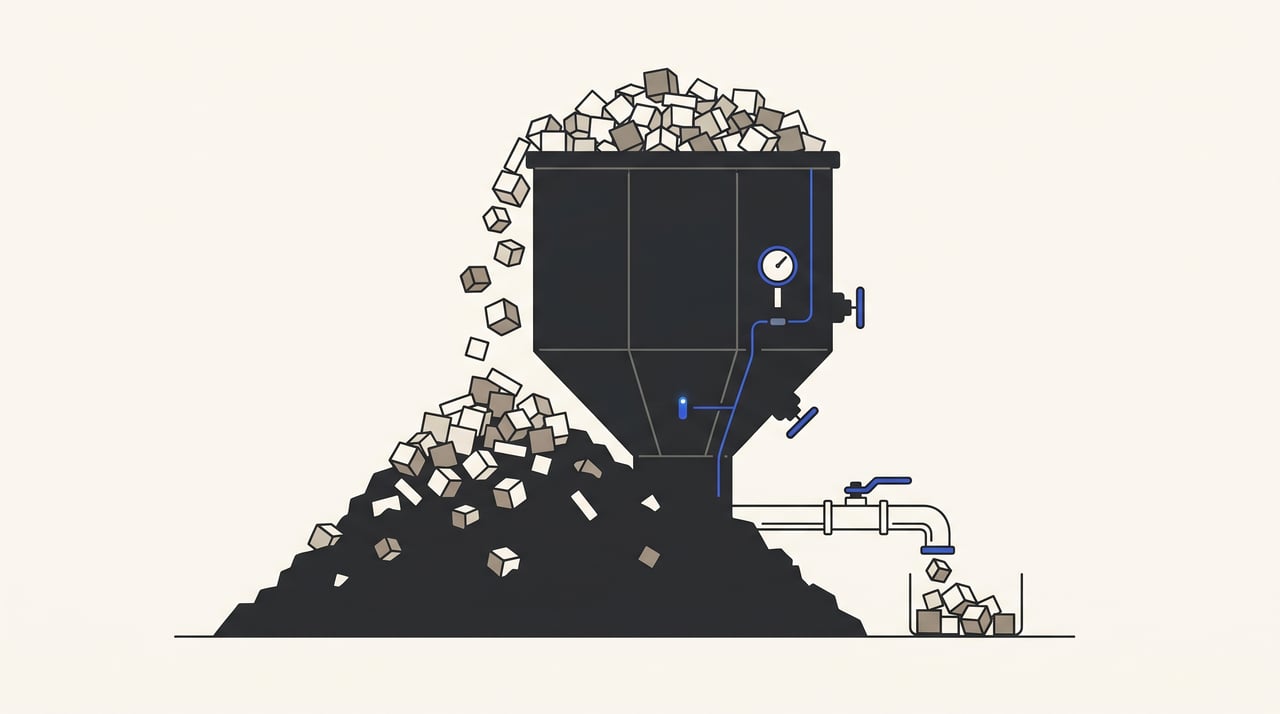

Magento's _cl tables had 902 million rows

For more than six months the WorkWear4All storefront went offline every night for a few minutes. I knew it was happening. The agency that built the platform knew. Our hosting provider knew. Three external Magento integrators we asked at various points over that stretch knew. None of them could tell me why, and I could not justify the time to keep digging — there was always something more visible on the queue, the outage was small enough to absorb, and the partial fix we already had in place was just stable enough to remove the urgency.

The actual cause was a query that took thirty seconds to write against information_schema. I should have run it the first month.

Magento 2 is not built for a catalog of the size we run. At 800K+ products, with every product touched daily for stock and price synchronisation from suppliers, the indexer cannot drain the _cl changelog tables faster than the platform writes to them, and the storefront eventually pays for that mismatch — in our case with a brief outage every night when something in the indexing pipeline gave up, restarted, and caught back up. The platform itself does not signal that this is happening. There is no warning in the admin UI, no indexer status that flips red before the symptom shows up at the storefront, no log line that points at the root cause. And the fix — once you find it — is documented in at least three public places, none of which surface when you ask a Magento expert to look at the symptom.

That is the part of the story I want to write up. Not “I found a clever fix” — the fix is well-known if you go looking from the operation side. The thing worth naming is that the answer exists, no expert I asked could retrieve it, and that is a failure mode of the platform’s ecosystem worth writing about honestly.

The shape of the install

I have written about the WorkWear4All stack in the introduction post on this site — multi-store Magento 2 across half a dozen European domains (workwear4all.nl, workwear4all.be, workwear4all.de, workwear4all.com, workwear4all.pl and several more specialised sub-stores), a shared backend running on Apache + Varnish + PHP-FPM + Redis. The piece that matters for this story is the catalog scale and the update pattern.

The catalog is 800K+ products, a mix of simple and configurable, sourced through suppliers’ daily exports. Every product gets touched once a day for stock and price sync from the supplier feeds. That is at least 800K product updates per day at the source level, and because Magento’s catalog indexers are subscribed to product changes — price, category-product, catalogsearch_fulltext, stock, the list goes on — each of those source updates fans out into a _cl row for every subscribed indexer. The per-day write volume into the changelog tables runs into the millions.

The most-pinned indexer was always catalogsearch_fulltext. It was the one that fell behind first, fell behind hardest, and was at the top of the indexer status whenever something went wrong. We are on Magento 2.4.7, which is the minor release immediately before the 2.4.8 default flip to Update by Schedule — so the schedule-mode behaviour described below is something we opted into, not something we inherited as a default.

What we tried first, and why the partial fix was the worst possible outcome

Before the actual fix, we tried the obvious thing. We installed Advanced Index Manager by Magefine, which batches the indexing process so it does not try to chew through the entire changelog in a single tick. It made the indexer more stable. The outages got shorter and less frequent. They did not stop entirely.

That partial fix is the moment I want to flag. Once the storefront was up enough to stop being a daily fire, the pressure to find the actual cause lifted. The Magefine module bought us six months of “stable enough that the outage isn’t blocking anything urgent” — which sounds like a good outcome and is in retrospect the worst possible outcome, because removing the urgency removes the time-budget for the deeper investigation that would have actually solved the problem. We did not stop trying. It just kept losing to the next fire.

I knew, six months in, that I would not find this quickly. I had been working with Magento 2 for about that long, and I knew enough to know that diagnosing something this far down the indexer pipeline was going to take significant time I did not have. The agency that built the platform had not found it. Our hosting provider, who has Magento experience of their own, had not found it. The three external integrators we wrote to over that stretch had not found it either. The conversations all ended the same way: someone took a look, could not put their finger on it, and moved on.

Looking back at it now, the diagnostic was just looking hard at the database for an anomaly. I am still not sure why nobody — including me, for six months — did that.

What was actually happening

The fix is operationally simple once you see it.

Magento 2’s “Update by Schedule” indexing mode is built around materialised view changelogs. Every indexer subscribes to a set of source tables; whenever a row in a source table changes, an entry is written to a corresponding _cl table for each subscribed indexer. The indexer cron reads new entries from each _cl table on a tick, processes them, and clears them. The whole thing is supposed to keep itself in equilibrium: writes flow into the changelog, the cron drains them, the indexes stay fresh.

The model assumes the cron can keep up. With 800K+ products updated daily, against the half-dozen indexers that subscribe to product changes, the per-day write volume into the changelog tables runs into the millions. The indexer cron, which processes entries sequentially through PHP, cannot drain that fast on the hardware we have it sitting on. The tables grow. They keep growing. Every day’s catalog import adds another layer of backlog the cron will not catch up on, because tomorrow’s import is already coming.

What happens when a _cl table grows past whatever specific threshold its corresponding indexer can no longer cope with — too much memory consumed by the in-flight batch, a query timeout, a lock that never releases, an indexer process that the OOM killer takes out — is that the indexer eventually fails, restarts, and tries to catch up from where it left off. While that catch-up is happening the storefront is briefly inconsistent or unresponsive, depending on which indexer fell over. In our case the most-pinned was always catalogsearch_fulltext, which is also the indexer where a stall is most visible to a customer because it gates the storefront’s product search.

The diagnostic I eventually ran was a single query against information_schema listing every _cl table by row count, sorted descending. The largest changelog tables came back at around 902 million rows — a number from memory, since I did not save the original output and the tables have long since been truncated. The shape was unmistakable: the indexer cron was not draining; it was being lapped, every day, by the next day’s catalog import.

The cron, and what it does and does not fix

The standard index cron group is not running on this server. The schedule-mode incremental processing it would do — reading from _cl tables, draining them, clearing them — is the thing that does not keep up with our update volume in the first place; the cron group has been disabled, and a nightly full reindex from source is doing the work that the incremental drain was supposed to do. That nightly run is the cron I want to walk through.

It performs three operations, in this order:

- Full reindex —

bin/magento indexer:reindexrebuilds every index directly from the source tables, bypassing the changelog mechanism entirely. - Truncate every

_cltable — once the reindex has produced authoritative indexes, the accumulated changelog entries are obsolete and the space they were taking up is dead weight. - Reset every

mview_statecursor —UPDATE mview_state SET version_id = 0for every row. This is the step Max Chadwick’s warning post is specifically about; without it, post-truncate changelog tracking silently breaks.

The order matters. Doing the reindex first means that if the reindex itself fails — out-of-memory, lock contention, a query timeout — the accumulated changelog entries are still in place and the schedule-mode mechanism (such as it works) still has its baseline state to fall back on. If we truncated first and the reindex failed, we would be left with empty changelog tables and indexes in the broken pre-cron state, which is strictly worse than where we started. Sequencing the destructive operations after the reindex turns a partial failure into a silent no-op rather than an outage.

Stripped of the internal wrapper I run it through, the operations look like this:

#!/usr/bin/env bash

set -euo pipefail

# Run from the Magento root, with DB credentials configured (e.g. via

# ~/.my.cnf or environment) so the mysql calls below pick them up.

# 1. Full reindex — rebuild every index directly from source.

php bin/magento indexer:reindex

# 2. Truncate every changelog table; the freshly rebuilt indexes have

# made the accumulated changelog entries irrelevant.

TABLES=$(mysql -N -e "

SELECT TABLE_NAME

FROM information_schema.tables

WHERE table_schema = DATABASE()

AND TABLE_NAME REGEXP '_cl\$';

")

for t in $TABLES; do

mysql -e "TRUNCATE TABLE \`$t\`;"

done

# 3. Reset every mview_state cursor to zero. This is the step Chadwick's

# warning is about — without it, post-truncate changelog tracking

# silently breaks.

mysql -e "UPDATE mview_state SET version_id = 0;"

This runs nightly. The storefront has been stable since it rolled out, and even after about a week of normal operation the largest _cl table sits at around 9 million rows — roughly a hundredth of the 902 million we found at the moment of discovery. A few side effects also cleared up around the same period — most notably a recurring problem we were having with price updates not propagating through to the storefront, where the price indexer would silently fall behind and customers would see stale prices. That stopped happening too. I cannot fully attribute it to the cron — it might have been the original schedule-mode mechanism falling behind, it might have been related to the Chadwick failure mode before the version_id reset was in place, it might have been an unrelated coincidence with a server or SQL restart — but it has not come back since the current setup has been running.

I want to be honest about what this fix is. It is not a root-cause fix. The root cause is that Magento 2’s indexer architecture was not designed for a catalog this large with this update pattern. The cron buys time by treating each night as a clean slate; the underlying mismatch between the platform’s indexing model and our operational reality is unchanged. If the catalog continues to grow or the daily update volume goes up further, this stops working too. The cron is a workaround.

There is also a real warning to read alongside this fix. Max Chadwick has a post titled “Don’t TRUNCATE the _cl tables” that argues — correctly — that naively truncating the changelog tables without resetting version_id in mview_state puts the install in a state where changelog-based indexing silently breaks, manifesting as updates that never reach the storefront. The cron above does the version_id reset explicitly, as step three; the reset is the part that closes Chadwick’s specific failure mode. A reader copying the truncate step without the reset is exposing themselves to the exact bug Chadwick is warning about.

There is one operational tradeoff worth being explicit about, beyond the “it is a workaround” framing above. With the standard incremental indexer cron group disabled and a nightly full reindex doing all the work, indexes are not being kept fresh during the day — they catch up overnight. That means there is a known staleness window of up to twenty-four hours for any catalog change made between reindex runs. For our update pattern, where supplier feeds run on a daily cadence and the storefront’s tolerance for slightly-stale category and search results is generous, that is an acceptable cost. For a shop where the catalog needs to reflect changes in minutes — flash sales, time-limited inventory, anything where intra-day freshness matters — this setup would not work; you would need either to keep the incremental cron group running and find some other way to handle the changelog volume, or rebuild more often than once a night.

The honest take: I am still unsure if this is the exact right way to go about it. It works and uptime improved drastically. That sentence is the real edge of my understanding, and the post is more useful with it on the page than without it.

Why none of the experts found it

I want to come back to the social part of this story, because it is the part that generalises beyond this specific bug.

Five separate parties looked at the problem before I solved it. The agency that built the platform. Our hosting provider. Three external Magento integrators. None of them proposed the changelog-table angle. The fix is not obscure — when I went back and checked after the fact, it surfaces in the first page of search results for “magento truncate _cl tables.” Max Chadwick wrote about it (warning against it, actually). The mgt-commerce knowledge base has a step-by-step tutorial that walks through almost exactly the cron I built. The Magento GitHub repository has an open issue (#29789) acknowledging the problem upstream. There is even a Packagist module that wraps the fix into an extension. The fix is documented.

It is just unreachable from the symptom side. Search for “magento storefront down at night” or “magento intermittent outage” or “magento indexer stuck” and the changelog-table angle does not surface. The expert you ask for help cannot get there from your symptom either, because their mental model starts from the same set of search terms yours does. The retrievability collapses at the symptom layer, not at the documentation layer. The answer is sitting in plain sight under a search term you have not thought to try, and the experts have not thought to try it for you because it is not the term they would associate with what you described.

The lesson I take from this — and the one I would have done differently if I could replay the six months — is to spend less time asking experts and more time looking at what the platform actually has in front of it. The query against information_schema was thirty seconds of work. The Magefine module, which I installed instead, was a real expense and a partial fix that bought six months of complacency. Looking at the database first would have saved most of the elapsed time.

This is going to get more common, not less. Magento 2.4.8 ships with a default change that flips all new indexers to Update by Schedule out of the box, which is the mode where this whole pattern bites. More shops are about to live a version of this story.

Where this sits now

The cron is the operating mode. There is no migration timeline. The storefront has been stable, the indexer has been keeping up, and the fix is doing its job.

Long-term, I think the platform is the wrong fit for our use case and we are going to design something else around it. The shape I am thinking about is an A/B-style cutover — start the new system on one of the smaller storefronts, prove it under live traffic, and only then think about migrating the larger ones. Our internal tooling already has the catalog data sitting in a MongoDB backing store, so the data layer for the new system is in place; the storefront and the surrounding operational pieces are not. There are no firm plans yet.

The specifics of the replacement architecture are not something I am ready to put on the record. What I will say publicly is what I have learned to design out: an indexer pattern that materialises a generic flexibility-first data model into a set of denormalised indexes through a cron that has to drain a changelog. That entire shape — the EAV-to-indexer pipeline that defines Magento 2’s catalog architecture — is the thing the cron in this post is patching around, and it is the thing I do not want to inherit into anything new. What I will design in is a separate post once there is something running to point at, not before. I would rather write up the second attempt once it has survived contact with reality than commit to specifics in advance.

The part I keep coming back to

The principle I keep coming back to on this is that a partial fix that removes the urgency is worse than no fix at all. The Magefine module was a real piece of engineering, it did real work, and it made the storefront more stable than it had been. It also bought us six months of “this is fine,” and during those six months I stopped looking at the actual problem because the actual problem had stopped costing me sleep. The next fire always wins against the smouldering one, and the partial fix is what turns the fire into a smoulder.

I do not have a clean rule that prevents this in the next system. The closest I can get is: when something has been tolerable for months but you have not actually understood what it is, treat the tolerance itself as the warning sign. The cron in this post should have existed five months earlier than it did. The reason it did not is that nothing was on fire enough for me to make it.

Related reading

-

A diamond painting shop the code could not save

I built a custom Node webshop for personalized diamond paintings, sold one canvas, and shut it down inside a month. The supplier was the bottleneck.

-

The adoption gap

I changed my mind about agentic coding loops. Most of my work this year was built next to one — and the honest read on what it lifts and what it leaves behind.

-

An agent built around not calling the LLM

A personal agent built so the default question every tick is whether the model needs to be called, and an architecture where the answer is usually no.